Be the first to know about our next DPE Summit! Sign up to receive event announcements.

What to expect:

50+ speakers

30+ sessions

600+ attendees

The ultimate event for Developer Productivity Engineering and Developer Experience. Join engineering experts and innovators as we come together to redefine what's possible in the age of AI.

Last year's highlights

Check out the DPE Summit 2025 session videos to get a preview of what to expect this year.

You can write code faster. Can you deliver it faster?

Generative AI is redefining how code gets written—faster, more frequently, and at greater scale. But your delivery pipeline wasn’t built for this. The more code GenAI produces upstream, the more pressure it puts on downstream systems: build, test, compliance, and deployment all become bottlenecks. Without significant change, GenAI won’t accelerate delivery—it’ll break it. This talk explores how GenAI is increasing code volume, reducing comprehension, and encouraging large, high-risk batches that overwhelm even mature CI/CD systems. The result? Slower feedback loops, more failures, and mounting friction between experimentation and enterprise delivery. To break this cycle, pipelines must significantly improve their performance, troubleshooting efficiency, and developer experience. A “GenAI-ready” pipeline must handle significantly more throughput without compromising quality or incurring unsustainable cost. This isn’t just a matter of scaling infrastructure. It demands smarter pipelines, with improved failure troubleshooting, intelligent parallelization, predictive test orchestration, universal caching, and policy automation working in concert to eliminate wasted cycles, both in terms of compute power and developer productivity. Crucially, these capabilities must shift left into developers’ local environments where observability, fast feedback, and root-cause insights can stop incorrect, insecure, and unverified code before CI even begins. The future of delivery isn’t just faster. It’s smarter, leaner, and built to scale with GenAI.

Watch the video

Beyond the Commit: A Fireside Chat on AI and Developer Productivity

Join Brian Houck (Microsoft) and Nachiappan Nagappan (Meta)—two of the most influential voices in Developer Productivity—for a rare and insightful fireside chat. Drawing on decades of research and industry experience, Brian and Nachi will explore the evolving landscape of Developer Productivity metrics, the transformative role of AI across the entire software development lifecycle (far beyond code generation), and where they would invest—with no budget constraints—to drive the next wave of innovation in Developer Productivity Engineering in the era of generative AI.

Watch the video

Optimizing for Time: Dark Matter

Much of the work in the productivity space is focused on build speed or tooling improvements. Although that work is beneficial, a larger opportunity lies in non-coding time: standup updates, XFN alignment, chat, email, and task management. All of these constitute the “Dark Matter” overhead that engineers have to deal with on a daily basis. We’ll dive into how Meta measures time, cover many of Meta’s top-line velocity metrics, and share some insights you can implement today to help your engineers be more productive.

Watch the video

Salesforce’s AI journey for Developer Productivity: From Single Tool to Multi-Agent Development Ecosystem

Salesforce’s AI journey for developer productivity began in early 2023 with a single tool that demonstrated 30+ minutes of daily time savings. The journey accelerated through 2024-2025 with the introduction of Model Context Protocol (MCP) exchanges, AI Rules systems, specialized tools for code reviews, and ambient task agents for automated testing. Today’s ecosystem offers developers a multi-tool, multi-agent experience. This transformation represents a shift from single-tool adoption to an orchestrated multi-agent development environment that amplifies human creativity while maintaining enterprise security and compliance standards.

Watch the video

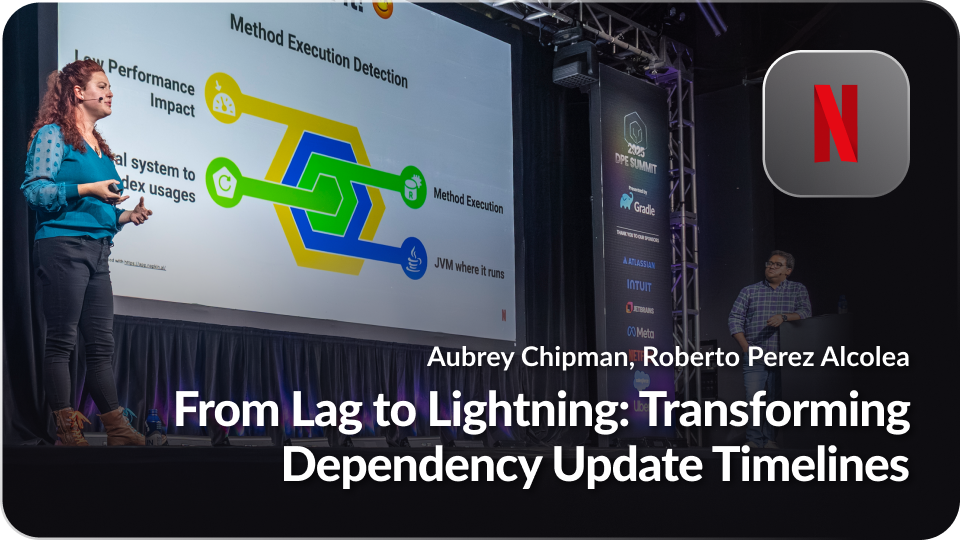

From Lag to Lightning: Transforming Dependency Update Timelines

Discover how we transformed our dependency update process so that the latest dependency versions now undergo zero-touch deployment in hours (rather than days or weeks) across thousands of repositories. This talk will highlight our innovative use of dependency management resolution rules, automated SCM changes, and post-deployment artifact observability tools to track those changes, showcasing the efficiency and speed achieved. We’ll also touch on future advancements, including language-agnostic tooling and proactive security measures, offering insights into maintaining robust and secure software delivery.

Watch the video

The Critical Role of Troubleshooting in an ML-based development process

This talk explores the critical role of troubleshooting in modern, ML-driven software development. We first look at traditional DORA metrics and the valuable insights they provide. Their inherent lag and delayed feedback loops, however, present challenges for effective optimization. The presentation introduces “Local DORA” metrics, such as Time To Restore (TTR) for a failing local or pull request build, as more actionable proxies that provide immediate feedback, enabling organizations to react swiftly to issues. In particular, optimizing local TTR is paramount for accelerating development speed. The talk will then address the dual impact of AI/ML on troubleshooting: while ML-generated code can complicate debugging, AI tools, such as those in Develocity, can significantly enhance troubleshooting capabilities, shorten feedback loops, and therefore improve development efficiency.

Watch the video

Frequently Asked Questions

- Can I apply to speak at DPE Summit 2026?
  Please email events@gradle.com to inquire about speaking opportunities.

- Who should attend DPE Summit 2026?
  Developers, engineering leaders, platform teams, and anyone focused on improving developer productivity and experience should attend.

- Can you send me a Visa Invitation Letter?
  Please email events@gradle.com to request a Visa Invitation Letter for DPE Summit 2026.

- Will food be provided?
  We will be providing breakfast and lunch as well as snacks throughout the day.

- Will sessions be recorded?
  Sessions will be recorded and posted on dpe.org in the weeks after DPE Summit… but sessions are much better in person!

- Will there be time to meet the speakers and network with other attendees?
  Yes! We have breaks and a happy hour scheduled in to allow time for networking with attendees and speakers.

- Can my company have a booth at the event?
  Please email events@gradle.com to learn more about DPE Summit sponsorship opportunities.

- Do you have a ticket cancellation/refund policy?
  Please email events@gradle.com with any ticket cancellations or ticket transfer requests.